I’m considering using WriteHuman AI for writing and editing, but I’ve seen mixed opinions online and can’t tell what’s real or sponsored. Can anyone share a genuine WriteHuman AI review, including pros, cons, pricing value, and how it compares to other AI writing tools so I don’t waste time or money?

WriteHuman AI review from someone who burned a few credits on it

WriteHuman is one of those “humanizer” tools that keeps getting mentioned, so I bought a plan and pushed it a bit. Short version: I would not rely on it for anything important.

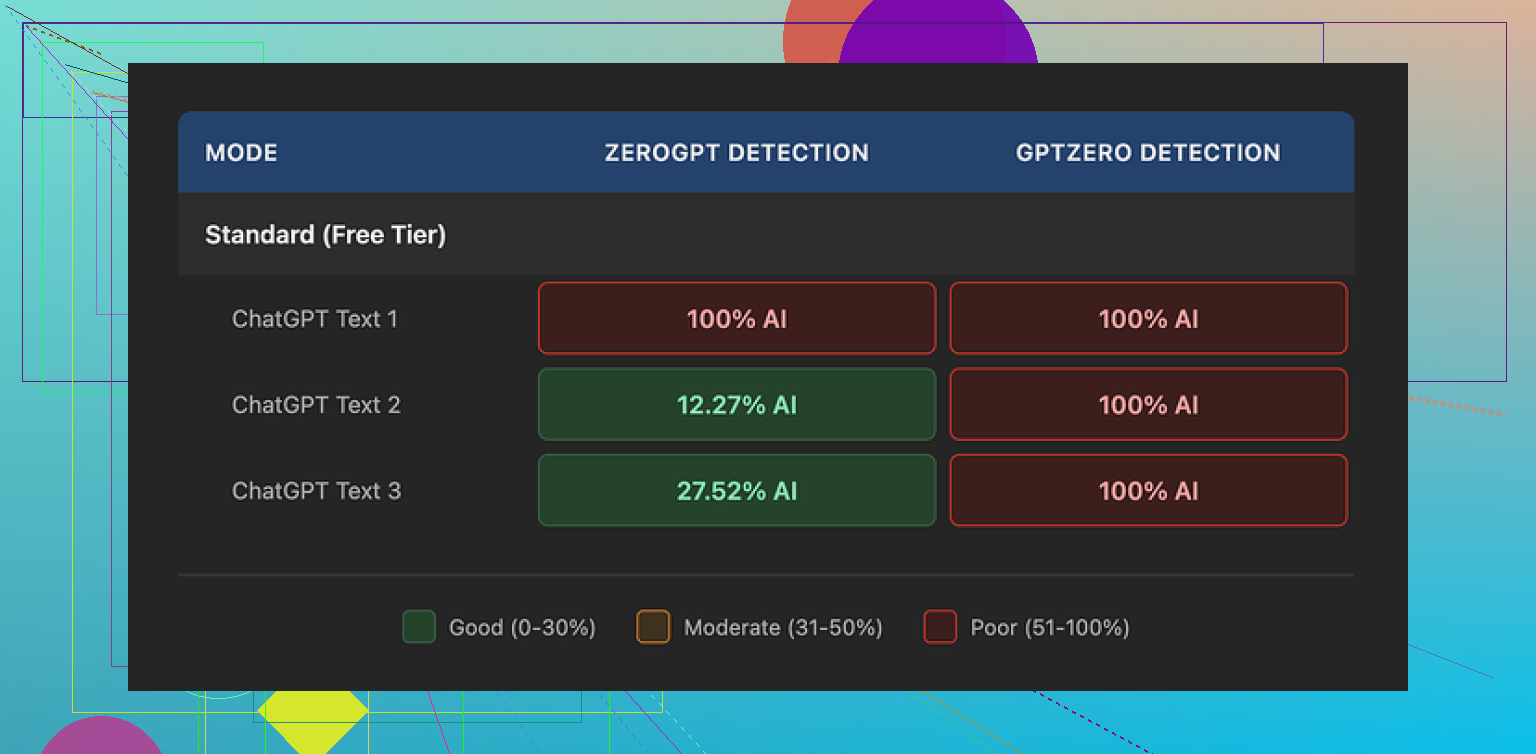

How it did against detectors

Their marketing name-drops GPTZero, so I started there.

I took three different AI-written samples, ran them through WriteHuman, then dropped the outputs straight into GPTZero.

Results:

• Sample 1: 100% AI

• Sample 2: 100% AI

• Sample 3: 100% AI

So, for me, it failed every time on the detector they reference in their promo copy.

Then I tried ZeroGPT with the same three WriteHuman outputs:

• Sample 1: 100% AI

• Sample 2: ~12% AI

• Sample 3: ~28% AI

ZeroGPT felt more random than “better”. One text was flagged hard, the other two much less so. There was no stable pattern I would trust for work or school.
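If you want to tabulate runs like these yourself, here is a minimal Python sketch. The scores are the ones listed above (the ZeroGPT values for samples 2 and 3 are approximate), and the `summarize` helper is a name made up for illustration, not part of any detector or tool.

```python
from statistics import mean

# Percent "AI" reported by each detector for the three WriteHuman outputs.
# Values are copied from the post; ZeroGPT samples 2 and 3 are approximate.
scores = {
    "GPTZero": [100, 100, 100],
    "ZeroGPT": [100, 12, 28],
}

def summarize(values):
    """Return (mean, spread), where spread is max minus min in percentage points."""
    return round(mean(values), 1), max(values) - min(values)

summary = {name: summarize(vals) for name, vals in scores.items()}

for name, (avg, spread) in summary.items():
    print(f"{name}: mean {avg}% AI, spread {spread} points")
```

With only three samples this is an illustration, not a benchmark. The number to watch is the spread: GPTZero never moved off 100%, while ZeroGPT swung by 88 points across outputs from the same tool.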

How the writing looked

This part surprised me more than the detector scores.

The “humanized” text had:

• Sudden tone jumps, like switching voice mid-paragraph

• One straight typo: “shfits” instead of “shifts”

• Some awkward phrasing that felt forced, like it was trying to be messy on purpose

Those glitches might help trip up some detectors, but they make the text worse for real use. If you are writing for clients, teachers, or editors, they will notice. It reads like an AI trying to fake being tired.

Screenshot from my runs:

Pricing and terms

This part pushed me off the fence.

Cheapest paid option when I tried it:

• Basic plan: $12 per month if you pay annually

• 80 requests on that plan

All paid tiers unlock an “Enhanced Model” and more tone options. I did not see enough improvement to feel good about the price.
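To put the Basic plan in per-request terms, here is a rough calculation. It assumes the 80 requests are a monthly allowance and that “pay annually” means the full year is charged up front; I did not confirm either, so treat both as assumptions. The function names are just for illustration.

```python
MONTHLY_PRICE_USD = 12.00  # Basic plan price per month on annual billing (from the post)
REQUESTS_INCLUDED = 80     # assumption: this is a per-month allowance

def cost_per_request(monthly_price, requests):
    """Price of one request if the whole allowance is used."""
    return monthly_price / requests

def annual_commitment(monthly_price):
    """Total paid for a year, assuming annual billing is charged up front."""
    return monthly_price * 12

per_request = cost_per_request(MONTHLY_PRICE_USD, REQUESTS_INCLUDED)
upfront = annual_commitment(MONTHLY_PRICE_USD)

print(f"Per request: ${per_request:.2f}")
print(f"Committed for the year: ${upfront:.2f}")
```

The per-request figure only holds if you use every request. If some outputs are unusable, divide the monthly price by the number you kept instead.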

Two bigger problems sit in the terms:

• They openly say they do not guarantee bypass of any detector.

• They have a strict no-refund policy.

So if it fails your use case, you just eat the loss.

Another detail that will bother some people:

• Text you send in is licensed for AI training

If you do not want your content used that way, you need to skip the tool altogether. There is no opt-out inside the product from what I saw.

What I ended up using instead

For comparison, I also tried Clever AI Humanizer.

From my testing:

• It did better on detector scores

• No paywall blocking basic use when I tried it

• Output felt closer to something a rushed human might write, without the weird hard tone swings

Not perfect, but it did a better job at the specific thing I needed, which was lowering detection risk without destroying readability.

Who WriteHuman might still fit

If you:

• Do not mind your text being used to train models

• Are okay with inconsistent detector results

• Want extra tone knobs to play with and do not care about the cost

then it might still be worth testing with small chunks of low-risk text.

For anything high stakes, after running three separate samples and paying for a plan, I would not lean on it.

I tested WriteHuman for a month for client content and grad school stuff. Mixed bag.

What I agree with from @mikeappsreviewer

The detector part is shaky. I saw the same thing on GPTZero. WriteHuman output still showed as high AI most of the time. On ZeroGPT and a couple of smaller detectors, it passed sometimes, failed other times. No pattern you can rely on.

Where my experience was a bit different

I found the writing quality slightly better than what Mike described. The tone shifts were there, but if you already write decently and only run short chunks, the output looked OK after a quick manual edit. If you expect push-button “safe” text, it falls short fast.

Pros I saw

• Interface is simple, no clutter.

• Multiple tones help if you want to roughen AI text so it feels less “ChatGPT”.

• For light rewriting of emails or short posts, it can save a bit of time.

Cons

• AI detectors stay unpredictable. It does not “solve” detection for school or other high-risk use.

• Pricing feels steep for what you get. My plan was around what Mike mentioned and I burned through credits quickly.

• No refunds plus no guarantee. You carry all the risk.

• Terms say your text gets used for training. Bad if you handle sensitive client material.

Pricing value

If you write a lot and need consistent output, the cost per usable piece gets high. I ended up using it only on low-importance content because I had to edit too much.

Where it fits

• Social posts.

• Rough blog drafts that you will heavily edit.

• People who do not care about detectors and only want to break the “ChatGPT sound”.

Where I would avoid it

• Academic work with strict AI rules.

• Legal, medical, or client confidential writing.

• Anyone on a tight budget.

Alternatives

Clever AI Humanizer worked better for me when the goal was lower AI detection without wrecking readability. The detector scores were more often in the “mixed” or “human-like” range and the text felt more natural. Still not magic, but closer to what you want if you worry about AI flags.

If your main goal is honest editing and improving your writing, you might be better off with a solid editor tool or a general LLM plus your own revisions. If your main goal is to “beat detectors”, no tool is giving you a sure thing right now, WriteHuman included.

I’m somewhere in between @mikeappsreviewer and @techchizkid on this.

Used WriteHuman for about 3 weeks for client blog drafts + some “please don’t scream AI” emails. My take:

How it actually feels to use

- UI: simple, almost boring, which is a plus. Paste text, pick tone, hit go. No learning curve.

- Speed: reasonably fast. No big complaints there.

- Workflow: It’s fine if you treat it like a rough rewriter, not a magic “humanizer.”

Quality of the writing

Here’s where I part ways a bit with @mikeappsreviewer:

- I didn’t see the wild typos every time, but I did see that weird “I’m pretending to be human” wobble.

- It has this habit of injecting random informal phrases that don’t fit the rest of the paragraph. Kind of like someone edited only every third sentence.

- If your base writing is already decent and you only humanize short sections, you can clean it up pretty fast. Long-form stuff gets messy and you’ll waste time fixing tone inconsistencies.

If you’re hoping it’ll turn stiff AI text into natural human prose with one click, that’s where it flops. You still have to edit a lot.

Detectors & risk

I’m aligned with both of them here:

- It does not reliably “beat” GPTZero or ZeroGPT. Sometimes scores look better, sometimes they don’t.

- Anyone using this for high-stakes academic work or corporate compliance is playing roulette.

- Their own terms say they don’t guarantee bypass. Believe them on that part.

If your main goal is “I must not trigger AI detectors,” then no, WriteHuman is not a safe bet. Honestly, nothing is 100% here.

Pricing vs value

Roughly in the same ballpark on pricing as what’s already mentioned:

- For what it does, it’s on the expensive side, especially with credit limits.

- You burn credits fast if you work with long articles.

- No-refund + no guarantee is the worst combo. If it doesn’t fit your use case, too bad.

Where I slightly disagree with the doom & gloom:

If you’re only doing occasional short pieces (emails, product blurbs, LinkedIn posts), the cost is tolerable. For heavy users or students on a budget, it’s a bad deal.

Privacy & terms

This is the real red flag for me:

- Your text being used for training means anything sensitive is off the table: client docs, internal reports, drafts of proprietary content, etc.

- No opt-out is a dealbreaker in certain industries. If you do agency / freelance work, that alone can disqualify it.

Where WriteHuman actually works

Decent fit for:

- Social posts where tone matters more than “purity” of writing.

- Roughing up content that sounds too ChatGPT-like, if you’re going to edit afterward anyway.

- People who don’t care about detectors and just want a slightly different “voice.”

I would not touch it for:

- Academic writing with strict AI policies.

- Anything under NDA or involving sensitive info.

- Client work where you’re paid for polish and consistency.

Alternatives & what I’d actually do

If you’re specifically chasing lower AI detection without sacrificing readability, I’d look at Clever AI Humanizer. My experience was pretty similar to what’s already been shared:

- More natural flow, fewer awkward “fake human” glitches.

- Detector scores were more often in that mixed/uncertain zone. Still not a guarantee, but less embarrassing than “100% AI” every time.

- For SEO content and blog stuff, Clever AI Humanizer felt more usable out-of-the-box.

Side note: if your goal is honest improvement and clarity, a normal LLM plus your own editing is still better than a dedicated “humanizer” most of the time. Humanizers are basically niche tools for a problem no one can actually solve reliably yet.

Bottom line

- WriteHuman is a “nice to toy with” tool, not something I’d build a workflow or career around.

- Mixed writing quality, inconsistent detector results, and touchy terms of service make it a medium-risk, medium-reward option.

- If you try it, start with low-stakes, short text and assume you’ll be hand-editing a lot anyway.

- If you care about natural output + some help with detection risk, Clever AI Humanizer is worth testing alongside it and comparing on your own samples.

Short version: WriteHuman is usable for light “roughening” of AI text, but it’s not good value if your goal is safety, consistency or serious writing.

What I see the same as @techchizkid / @suenodelbosque / @mikeappsreviewer

- Detectors: Totally inconsistent. Sometimes scores drop, sometimes not, and GPTZero in particular still screams “AI.” If your use case lives or dies on detection, this is gambling, not strategy.

- Pricing: For a capped, credits-based tool that still needs heavy editing, the cost per solid piece is high, especially if you do long-form.

- Terms & privacy: Using user content for training with no real opt-out rules it out instantly for confidential or client-bound material. Their no-refund + no-guarantee combo is pretty hostile to users.

Where I slightly disagree

- Writing quality: I’d rank it between the others. On short pieces like emails, it was fine after edits. On longer essays, the tonal drift and random informal inserts felt worse than what some people here described. To me it reads like pasted-together partial edits, and that actually increased my editing time.

- “Use it for low-stakes content”: I’m a bit harsher here. Even for casual blog drafts, I got annoyed fixing the jerkiness in voice. In many cases, running a normal LLM and then doing a quick pass myself was faster than cleaning up WriteHuman’s “fake imperfections.”

Where WriteHuman can make sense

- Short social posts where you just want to break that ultra-smooth ChatGPT vibe.

- Internal or personal notes where you do not care about detectors or long-term consistency.

- People who like fiddling with tone knobs for experimentation, not production.

I would skip it for

- Any academic setting with explicit AI policies. Tools like this cannot give you the certainty those policies expect.

- Client-facing content that must be polished, consistent, and on-brand. The tonal swings are very noticeable.

- Anyone sensitive about data use, NDAs, or proprietary drafts.

On Clever AI Humanizer

A few folks already mentioned it, and I land closer to the “cautious thumbs up” camp:

Pros

- Output usually reads more like a rushed but real person, with fewer jarring tone shifts.

- Detector scores, in my tests, more often landed in “mixed/uncertain” territory rather than screaming 100 percent AI. Still not guaranteed, but less embarrassing.

- Better out-of-the-box flow for blog-style content, so you spend more time tweaking ideas and less time fixing forced “human-like” glitches.

Cons

- Still not a magic AI detector shield. If you absolutely must avoid flags, you are still in the gray zone.

- You will still need to edit, especially for structure, factual accuracy, and voice alignment.

- If they ever go hard on paywalls or strict credit caps, the same value problem WriteHuman has will show up.

Compared to what @techchizkid, @suenodelbosque and @mikeappsreviewer wrote, I’m slightly less forgiving of WriteHuman as a daily tool and a bit more willing to lean on Clever AI Humanizer as a helper for casual content and SEO-style articles. But in both cases, I would treat them as “assistive rewriters,” not as a way to bypass rules or to skip doing your own editing.

If your main aim is genuinely better writing, you are still better off with a capable general LLM plus your own judgment. Tools branded as “humanizers” solve a very narrow slice of the problem and, right now, none of them erase the risks tied to AI detection.