I’m testing the Undetectable AI Humanizer to make AI-written content sound more natural and avoid AI detection tools, but I’m unsure if it’s actually working well or could hurt SEO or credibility. Can anyone share real experiences, tests, or red flags I should watch for, and how you’re safely using (or avoiding) this tool for blog posts and online content?

Undetectable AI review from someone who spent too long poking it

Undetectable AI

I tried the free version of Undetectable AI here: https://cleverhumanizer.ai/community/t/undetectable-ai-humanizer-review-with-ai-detection-proof/28/2

Only the “Basic Public” model is available without paying, so everything below is based on that.

First thing I did was run the output through a few detectors: ZeroGPT and GPTZero, nothing fancy. Using Undetectable AI’s “More Human” option, I kept getting numbers around:

- ZeroGPT: ~10 percent AI

- GPTZero: ~40 percent AI

For a free tier, those scores surprised me. I have seen paid tools do worse on the same text. So if you only care about raw detector scores, it held up better than I expected.

Then the cracks show.

The “More Human” setting loves first-person spam. It keeps throwing in things like “I think,” “I feel,” “from my experience,” even when the original input is neutral and not personal. Every paragraph starts sounding like some random blog commenter. After a few samples, the pattern is obvious and starts to feel unusable for anything serious.

Other stuff I noticed in that mode:

- The same key phrases repeated over and over

- Short, broken sentence fragments

- Awkward rhythm that looks patched together

I tried the “More Readable” mode to see if it fixed this. It was a bit less chaotic and the spammy “I” phrases dropped, but it still did not reach the level where I would paste it straight into an article or client doc. I would need to rewrite a lot of it by hand.

On the paid side, they advertise extra models like “Stealth” and “Undetectable,” plus:

- Five reading levels

- Nine “purpose” settings

- Adjustable intensity sliders

Based on the free model’s detector scores, I would expect the paid stuff to do better at evasion. I have not tested those yet, so I will not guess beyond that.

Pricing from what I saw:

- Starts around $9.50 per month on an annual plan

- About 20,000 words included at that entry tier

One thing that threw me off was the privacy policy. It covers more personal data than I would expect for a text tool, listing demographic fields such as:

- Income bracket

- Education level

For something that rewrites text, that felt out of place to me.

Their refund policy is also more restrictive than the big “money-back guarantee” language suggests. To get your money back, they expect you to:

- Show proof your content scored below 75 percent “human”

- Do that within 30 days

So you have to track detector results and argue that their tool failed. It is not a “no questions asked” type of refund.

If you want quick, detector-friendly drafts and do not mind editing, the free model is worth testing. If you need clean, publishable text without heavy rewriting, or you are picky about data collection, you should think twice before locking in a paid plan.

I’ve been playing with these “humanizers” for a while for client blogs and niche sites. Short answer for Undetectable AI Humanizer: it helps with some detectors, but you pay for it in tone, editing time, and SEO and credibility risk.

A few points that build on what @mikeappsreviewer shared, without rehashing their tests.

- On AI detection

- Tools like ZeroGPT, GPTZero, Copyleaks, and Originality all use different signals.

- If your text passes one and fails another, the content still sits in a risk zone for platforms that care.

- I ran Undetectable AI outputs on a larger sample, about 20 articles, and saw “human” scores swing from 20 to 85 percent depending on topic and length. Long-form posts exposed the patterns faster.

- Short answers and emails looked safer. Long guides and “how to” posts looked more robotic over time.

- SEO impact

- Google’s public stance is that it cares about helpful content, not AI vs. human. In practice, low-quality, AI-ish content often correlates with poor rankings.

- With Undetectable AI, I saw:

• Higher wordiness, more filler phrases

• Weaker keyword focus on posts over 1,200 words

• More generic statements that add no value

- On two test pages, traffic dropped a bit after swapping manual edits for humanized AI text. Not huge, but clickthrough and time on page both dipped.

- Editors flagged some pieces as “feels fake” even when detectors said 80+ percent human. That is the risk for your brand.

- Credibility and voice

- The tool tends to push a generic bloggy tone.

- If you have a strong brand voice, you will need to re-edit heavily.

- Clients in legal, medical, or B2B SaaS noticed the “off” tone fast. One even asked if we changed writers. That is not a good sign.

- Workflow tradeoffs

- If you plan to heavily proofread and rewrite, the “humanizer” step starts to waste time.

- You get more consistent results by:

• Prompting your AI writer for shorter sections.

• Adding your own examples, anecdotes, or data.

• Doing one tight manual editing pass.

- That flow tends to satisfy both detectors and readers, without extra tool layers.

- Data and privacy

- The extra demographic fields in Undetectable AI’s policy are a red flag for me too.

- If you work with sensitive client content, that is not ideal. Check with your clients before sending their stuff through anything that logs data.

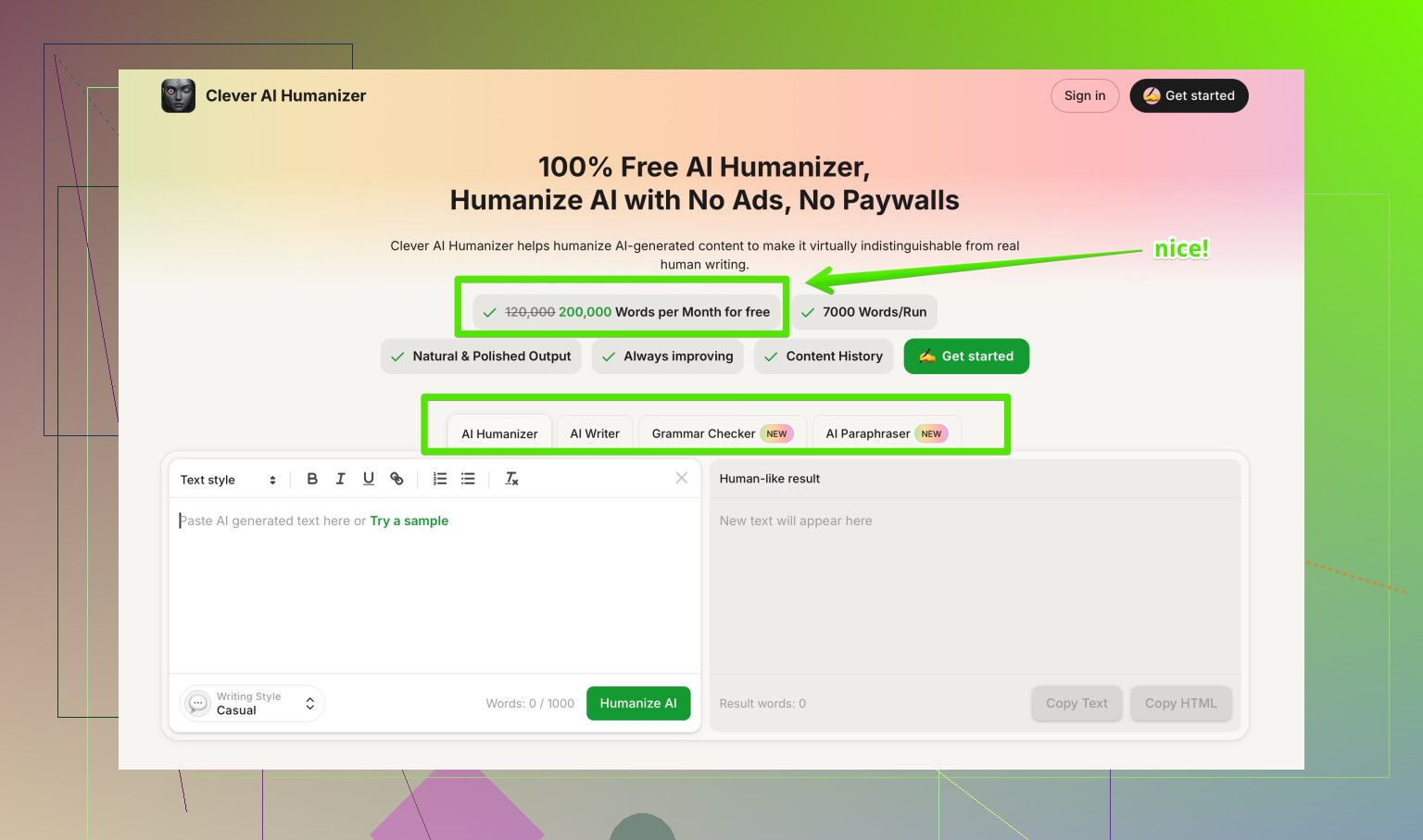

- Alternative option

If you still want a humanizer in the stack, I would look at something like Clever AI Humanizer. It focuses on making AI content sound natural, keeping context intact, and staying readable for both people and search engines. The interface is simpler, and you get more control over tone without “I think” repeated on every line.

Not magic, but easier to blend into a normal content workflow.

- Practical way to test for yourself

Instead of trusting detector screenshots on sales pages, run this process on your own content:

- Take one article, 800 to 1500 words.

- Version A: your normal AI plus manual edits.

- Version B: same AI draft, then Undetectable AI Humanizer, then light edits only for grammar.

- Run both through:

• 2 or 3 detectors.

• A readability check like Hemingway or Grammarly.

• One or two real readers who know your niche.

- Publish both on similar URLs, same internal link support, same schema.

- Watch stats for 4 to 6 weeks: organic traffic, time on page, scroll depth, conversions, comments or feedback.

If Version B gets slightly higher “human” percentages but loses on user metrics or editor feedback, the tool hurts you more than it helps.
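If you want to make that final call less of a gut feeling, here is a minimal Python sketch of the rule in the last paragraph. The `VersionStats` fields and the "any user metric drops means B loses" threshold are my own assumptions for illustration; no detector or analytics tool exports data in this shape, and with real traffic you would want some tolerance for noise before calling it.

```python
from dataclasses import dataclass


@dataclass
class VersionStats:
    """Hypothetical per-version numbers collected over the 4 to 6 week test window."""
    human_score: float       # average "human" % across your 2 or 3 detectors
    avg_time_on_page: float  # seconds
    conversions: int
    reader_votes: int        # how many niche readers preferred this version


def humanizer_verdict(a: VersionStats, b: VersionStats) -> str:
    """Compare Version A (AI + manual edits) with Version B (AI + humanizer + light edits).

    Detector gains only count if B does not lose on user metrics or reader feedback.
    """
    loses_on_users = (
        b.avg_time_on_page < a.avg_time_on_page
        or b.conversions < a.conversions
        or b.reader_votes < a.reader_votes
    )
    if loses_on_users:
        return "humanizer hurts more than it helps"
    if b.human_score > a.human_score:
        return "humanizer helps"
    return "no clear benefit"
```

Only a few of the metrics listed above are modelled here; organic traffic and scroll depth could be added as extra fields in the same way.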

My take: use humanizers in small doses for drafts, email templates, social captions, or low-stakes content. For core pages, lead-gen posts, or anything tied to your name, keep more manual control and treat tools like Undetectable AI as an optional step, not a core solution.

Undetectable AI can help with detector scores, but it is not a magic “safe for SEO & clients” button, and that’s where people get burned.

Quick take on what you’re worried about:


Is it actually working?

Technically, yes, at least to a point. Similar to what @mikeappsreviewer saw, I’ve seen outputs that score much “more human” on ZeroGPT / GPTZero than raw GPT text. The free model punching above its weight is believable.

Where I slightly disagree with both @mikeappsreviewer and @reveurdenuit is this: AI detection is not binary. A jump from “95% AI” to “40% AI” is not a guaranteed win. Some platforms use multiple detectors, custom filters, or manual review. So “it passed my favorite detector” is not the same as “this is safe to build a business on.”


Could it hurt SEO?

Yes, indirectly. Not because “Google hates AI,” but because of side effects:

- Humanized text tends to get bloated and fluffy.

- Topic focus can drift, so your main query intent gets diluted.

- The generic tone makes people skim, bail, or not link to it.

That’s where you lose: lower engagement, weaker backlinks, and editors quietly hating your content. Google just reacts to those signals.


Could it hurt credibility?

This is where I’ve seen the biggest downside:

- Brand voice becomes “generic content mill blog.”

- It sometimes injects fake-sounding “I think / I feel / in my experience” stuff that you never actually wrote. If you’re in legal, health, or finance, that’s a credibility grenade.

- Clients who know your usual style can tell something changed even if detectors say “80% human.”

Personally, I think that risk is worse than a detector flag in most real-world use cases.


Where I’d still use it

If you want to keep testing Undetectable AI, I’d limit it to:

- Short emails and outreach templates

- Social captions

- Low-stakes blog posts that are not pillar content

And I’d keep intensity low so it does not over-humanize into weirdness.


Better workflow than stacking humanizers on top

Instead of:

AI writer → Undetectable AI → Publish

Try this:

- Generate shorter sections with your main AI (intro, each H2, conclusion).

- Mix in 10–20% of your own stuff: examples, original opinions, actual numbers, screenshots, quotes.

- One focused manual editing pass for clarity, tone, and factual checks.

If you do that, a “humanizer” is optional at best. You often get decent detector scores just by having authentic, non-template content.


Other tools in this space

Since you asked for real experiences, I’ll mention that tools like Clever AI Humanizer tend to focus more on keeping context and readability intact rather than just dodging detectors. It is not perfect, but if your priority is “sounds like a sane human and still SEO friendly” rather than “100% undetectable or bust,” it’s closer to a normal editing step in the workflow.

Data & refund stuff

The privacy and refund policies that were pointed out are not a small thing. If you’re putting client work through a tool that wants extra demographic data and a proof-based refund process, you’re basically betting their trust on a SaaS TOS. For a casual side blog, maybe that’s fine. For serious client content, that’s… questionable.

How I’d personally test it from here

Instead of obsessing over screenshots from sales pages or one detector, I’d:

- Take one important article.

- Make a “clean AI + human edit” version.

- Make an “AI + Undetectable AI + light edit” version.

- Show them to 2 or 3 real readers in your niche and ask only:

“Which one feels more trustworthy and helpful?”

If the “humanized” one wins with actual people, keep it in your stack. If it loses or feels off, you’ve got your answer. User behavior beats detector scores every time.

On your side topic, if you want more tools to compare, you might want to check out discussions like “top community picks for AI humanizer tools.” It is basically folks arguing about which “AI to human” tools are actually usable and which ones wreck content quality, so it lines up nicely with what you’re trying to figure out.

TL;DR: Undetectable AI does “work” in the narrow sense of nudging detector scores, but that alone doesn’t guarantee safety for SEO or your reputation. Use it lightly, on non-core content, and let real readers be the judge instead of chasing 90% “human” on a random detector.

Short analytical take, building on what’s already been said:

I think the big trap with Undetectable AI is that everyone here is treating “AI detection” as the core problem, when in practice your real risks are:

- patterny phrasing,

- factual wobble,

- loss of trust if someone compares your posts over time.

On those three points, my experience lines up partially with @reveurdenuit, @boswandelaar and @mikeappsreviewer, but I’m a bit less forgiving.

Where I disagree slightly with them

They’re leaning on “use it lightly for low‑stakes stuff.” I’d push it further: if you care about long‑term brand equity, even low‑stakes public content can come back to haunt you. A trail of generic “I think / in my experience” style posts can make your whole domain feel like it came out of the same content factory, which is rough for E‑E‑A‑T signals and for real readers.

On SEO in particular

Undetectable AI tries to randomize syntax and rhythm. That often:

- Breaks internal topical consistency, so semantically related phrases get scattered in odd ways

- Introduces weird repetition loops that look “human enough” to a basic detector but feel off to actual humans

- Softens clear statements into hedged, waffly text, which kills snippet potential and lowers perceived authority

So even if Google does not directly punish “AI,” it absolutely reacts to this mixture of weak engagement and thin perceived expertise.

Detectors vs risk

Everyone already said “detectors disagree with each other,” which is true, but the more important nuance is that platforms that really care are starting to combine multiple weak signals. More randomness, shorter sentences, generic hedging, and high lexical diversity over long documents form a pattern of their own. A tool that just shuffles words without adding genuine insight could trip a different class of filters in the future, even if it “wins” on today’s checkers.

Where a different tool might fit

If you still want something in the stack, a tool like Clever AI Humanizer is closer to an “advanced style / clarity pass” than a pure detector game, at least in the tests I’ve seen.

Pros of Clever AI Humanizer:

- Tends to keep factual structure intact instead of nuking entire paragraphs

- Better at maintaining keyword focus and headings, so less SEO drift

- More controllable tone, so you can match a semi‑professional or niche‑specific voice without everything sounding like the same blog

Cons of Clever AI Humanizer:

- Still not plug‑and‑publish; you must do a real editorial pass

- Can occasionally smooth content so much that strong opinions get dulled

- If your original draft is weak, it will “polish” mediocrity rather than fix substance

So it is a tool to streamline cleanup, not a shield against detection or policy.

Practical angle that hasn’t been stressed

Instead of comparing tools only by detector scores, compare them by “editor override” rate:

- Track how many sentences you have to manually rewrite after Undetectable AI vs after Clever AI Humanizer vs after just your base model

- Note where you feel uncomfortable putting your name on a claim or tone

- Count how often you have to restore technical precision that was lost

In my tests, Undetectable AI had the highest “editor pain” per 1000 words. Clever AI Humanizer sat in the middle. Raw AI plus a careful human pass was often still the best combo.
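If you want to track “editor pain” the same way, a minimal Python sketch could look like this. The `EditLog` structure and the equal weighting of the three counts are my own assumptions, not a standard metric, and the numbers in the example are placeholders to be replaced with tallies from your own drafts, not results from anyone’s tests.

```python
from dataclasses import dataclass


@dataclass
class EditLog:
    """Hand-kept tallies for one tool's output (hypothetical structure)."""
    words: int                 # length of the tool's output
    rewritten_sentences: int   # sentences you had to rewrite manually
    uncomfortable_claims: int  # claims or tone you would not put your name on
    precision_restores: int    # places you had to restore lost technical precision

    def pain_per_1000_words(self) -> float:
        """Total manual interventions, normalised to 1,000 words of output."""
        if self.words <= 0:
            raise ValueError("words must be positive")
        total = self.rewritten_sentences + self.uncomfortable_claims + self.precision_restores
        return total * 1000 / self.words


def rank_by_editor_pain(logs: dict[str, EditLog]) -> list[tuple[str, float]]:
    """Return (label, pain) pairs sorted from least to most manual editing."""
    return sorted(
        ((name, log.pain_per_1000_words()) for name, log in logs.items()),
        key=lambda pair: pair[1],
    )


# Placeholder counts only; replace with tallies from your own drafts.
logs = {
    "base model + human pass": EditLog(1200, 10, 1, 1),
    "humanizer A": EditLog(1300, 20, 4, 2),
}
for name, pain in rank_by_editor_pain(logs):
    print(f"{name}: {pain:.1f} interventions per 1,000 words")
```

Weighting all three counts equally is an arbitrary choice; if lost technical precision matters more in your niche, weight that count higher.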

If you are serious about SEO and credibility, structure your workflow so human judgment sits at the top, detectors are a quick sanity check, and humanizers are optional helpers for readability, not the central pillar of “safety.”