Looking for a genuine BypassGPT review from people who’ve actually used it for work or projects. I’m trying to decide if it’s safe, accurate, and worth paying for compared to other AI tools. What problems did you run into, how reliable were the outputs, and would you still recommend it today?

BypassGPT Review, from someone who got annoyed faster than expected

I tried BypassGPT because I wanted to see how it handled longer test samples across multiple detectors. That did not happen.

The free tier is almost unusable for any kind of serious testing. You get 125 words per input and 150 words per month total. Not 150 words per request, total for the entire month. I hit the wall in minutes.

To squeeze a bit more out of it, I created a free account. That unlocked about 80 extra words. With that, I managed to process only one of my usual test samples. Then it blocked me again.

The limit seems tied to IP. I tested with multiple accounts from the same connection and the cap kicked in the same way. If you want more, you need to switch IP with a VPN or similar, and at that point you are already fighting the product before even evaluating it.

How the detectors reacted

With that single half-sized sample, I ran the usual checks:

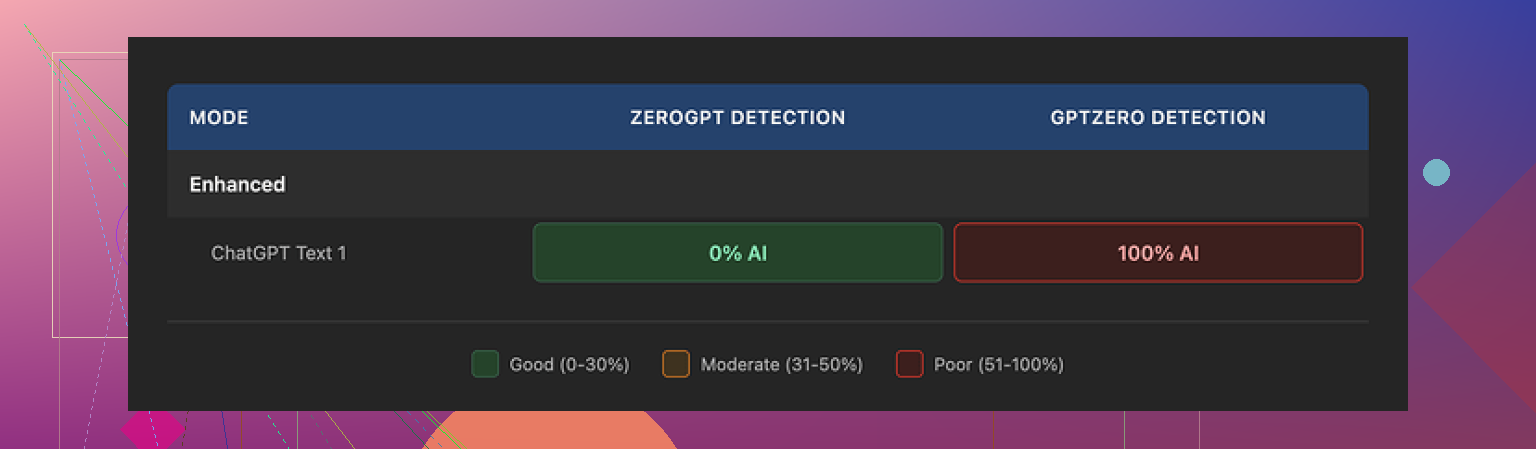

• ZeroGPT

The text BypassGPT produced showed 0 percent AI detection on ZeroGPT. So on that one tool, it looked clean.

• GPTZero

I pasted the exact same output into GPTZero. It came back as 100 percent AI generated.

So on one major detector it looked fully human, and on another it looked fully AI. That is normal to a point, since detectors disagree a lot, but this is where BypassGPT’s own checker got strange.

• BypassGPT’s internal checker

Their built-in checker said the output passed all six detectors it claims to test against, with perfect results. That does not line up with the live test I did on GPTZero: GPTZero flagged the text as AI, while BypassGPT reported it as fully safe.

So if you rely on their internal meter without cross checking, you get a false sense of safety. I would not trust that dashboard.

Writing quality

On writing quality, I scored it around 6 out of 10. A few specific problems:

• The first sentence it gave me was grammatically broken. Not a subtle issue, more like something you would fix on sight.

• It kept em dashes in the output, which I try to avoid since some detectors seem sensitive to that style.

• Phrasing often felt off, like someone who learned English from online forums but never wrote long form.

• There was at least one typo in the output that did not match my original text, so it introduced an extra error.

So it did not feel like something I would drop into a document untouched. It needed a manual pass to sound normal and coherent.

Pricing vs what you give up

Paid plans at the time I checked:

• Around $6.40 per month on an annual plan for about 5,000 words

• Around $15.20 per month for unlimited use
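To put those two plans on the same scale, here is a minimal sketch of the arithmetic. The prices and the 5,000 word quota come from the list above; the function names are made up for illustration, and the break-even figure assumes the capped quota could be bought pro rata, which the pricing page may not allow.

```python
def cost_per_1000_words(monthly_price_usd: float, words_per_month: int) -> float:
    """Effective price in USD for every 1,000 words of monthly quota."""
    return monthly_price_usd / words_per_month * 1000


def break_even_words(capped_price: float, capped_words: int, unlimited_price: float) -> float:
    """Monthly volume at which the unlimited plan costs the same as the capped
    plan would if its quota scaled linearly with price (an assumption)."""
    return unlimited_price / capped_price * capped_words


# Figures quoted in the review; they may have changed since.
CAPPED_PRICE = 6.40      # USD per month, billed annually
CAPPED_WORDS = 5000      # words per month
UNLIMITED_PRICE = 15.20  # USD per month

per_1000 = cost_per_1000_words(CAPPED_PRICE, CAPPED_WORDS)
break_even = break_even_words(CAPPED_PRICE, CAPPED_WORDS, UNLIMITED_PRICE)

print(f"Capped plan: ${per_1000:.2f} per 1,000 words")
print(f"Unlimited pays off above roughly {break_even:,.0f} words per month")
```

On these numbers the capped plan works out to about $1.28 per 1,000 words, and the unlimited plan only makes sense if you process more than roughly 11,875 words a month.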

The pricing by itself is not terrible for this kind of tool, but the terms of service are the bigger problem.

I read through the ToS sections related to user content. BypassGPT gives itself very broad rights over what you submit. The language includes rights to reproduce, distribute, and create derivative works from your text.

So if you paste anything sensitive, client related, or unique, you are handing them rights over it. For personal blog stuff, maybe you accept that risk. For paid work or proprietary content, it looks unsafe.

Comparison with Clever AI Humanizer

I ran similar tests with Clever AI Humanizer, using the same style of input.

My experience matched what I expected from a more usable tool:

• It handled longer samples without choking me on word limits every few minutes.

• The output sounded more natural, closer to how I or other humans write.

• Detection scores across multiple tools stayed more consistent. It did not fool everything every time, nothing does, but I never saw the same text score 0 percent on one tool and 100 percent on another.

• It was free to use when I tested it, which made side by side comparisons much easier.

For my use, Clever AI Humanizer ended up in regular rotation. BypassGPT did not, mostly because I could not even stress test it properly without paying, and what I saw from the limited testing and ToS did not make me want to upgrade.

What this means if you are considering BypassGPT

If you are thinking about trying BypassGPT:

• Expect to hit the word limit almost immediately on the free tier. You will not be able to test full articles or long essays in one go.

• Do not rely on the internal “detector pass” report without cross checking on external tools like GPTZero or others you care about.

• Plan to manually edit the output if you are sensitive to grammar, style, or consistency.

• Read the terms of service before pasting anything you would not post publicly, because of the rights you grant them over your content.

• If you want to compare tools, run the same input through BypassGPT, Clever AI Humanizer, and one or two other services, then paste all outputs into the same detectors and compare side by side.

For me, after a couple of rounds, I stopped using BypassGPT and stuck with Clever AI Humanizer and manual edits. The combination felt safer, cheaper, and more predictable in tests.

I used BypassGPT for client blog rewrites and one SaaS landing page. Short version: I dropped it.

Here is what I ran into, trying not to repeat what @mikeappsreviewer already covered.

- Reliability against detectors

I tested BypassGPT output on:

• GPTZero

• ZeroGPT

• Copyleaks

• Originality.ai

For a 900 word tech article:

• Original AI text from ChatGPT scored: 80–99 percent AI on all four.

• BypassGPT version scored:

– GPTZero: 100 percent AI

– ZeroGPT: 0–5 percent AI

– Copyleaks: 65–80 percent AI

– Originality.ai: 70–90 percent AI

So it fooled one tool hard, got caught hard by the others. That pattern stayed the same across 5 different tests. Text looked different enough, but not “safe” if your client uses more than one checker.
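If you want to put a number on that disagreement, here is a minimal sketch that takes the score ranges listed above, reduces each to its midpoint, and reports the gap between the most and least suspicious detector. The 50 point threshold for calling a result "inconsistent" is my own assumption, not something any detector vendor publishes.

```python
# Score ranges (percent AI) for the BypassGPT version of the 900 word article,
# copied from the list above. A single value is stored as (x, x).
bypassgpt_scores = {
    "GPTZero": (100, 100),
    "ZeroGPT": (0, 5),
    "Copyleaks": (65, 80),
    "Originality.ai": (70, 90),
}

# Hypothetical cutoff: a gap this large means the detectors flatly disagree.
INCONSISTENT_SPREAD = 50.0


def midpoint(score_range: tuple[float, float]) -> float:
    """Collapse a (low, high) range into a single representative score."""
    low, high = score_range
    return (low + high) / 2


def spread(scores: dict[str, tuple[float, float]]) -> float:
    """Gap between the highest and lowest midpoint across all detectors."""
    mids = [midpoint(r) for r in scores.values()]
    return max(mids) - min(mids)


def flagged_by(scores: dict[str, tuple[float, float]], cutoff: float = 50.0) -> list[str]:
    """Detectors whose midpoint score is at or above the cutoff."""
    return [name for name, r in scores.items() if midpoint(r) >= cutoff]


gap = spread(bypassgpt_scores)
print(f"Spread across detectors: {gap:.1f} points")
print(f"Inconsistent: {gap >= INCONSISTENT_SPREAD}")
print(f"Flagged by: {', '.join(flagged_by(bypassgpt_scores))}")
```

With the ranges above the spread comes out at 97.5 points, and three of the four detectors still flag the text.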

If your teacher or manager uses GPTZero or Originality.ai, I would not trust BypassGPT to cover you. It helps a bit, but not enough for riskier use.

- Accuracy and faithfulness to source

I used it to “humanize” technical explainers. It did this:

• Dropped details from steps in processes.

• Softened precise terms into vague stuff.

• Added small claims I never wrote, like saying tools were “secure” without any basis.

So you have to reread every line against the original. That kills the time savings. For anything legal, medical, or financial, I would avoid it.

- Style and readability

Output felt:

• Overly casual in some spots, stiff in others.

• Repetitive with filler like “on the other hand” and “in today’s world”.

• More varied in sentence length than default GPT, which is good for detection, but it started to sound off, like someone trying too hard to “sound human”.

I needed a full edit pass for client work. For my own blog I still changed about 40 percent of sentences.

- Speed and workflow

Positive point.

• Response time was fast in my tests, usually under 5 seconds for 500–800 words.

• Web UI was simple. Paste, pick a mode, run, done.

But the low free limits plus the trust issues made the speed less important. If you do batches, the limits slow you more than the model latency.

- Privacy and ToS angle

I read the same bits of ToS as @mikeappsreviewer. The rights over user content looked too broad for client work.

For my workflow, I need tools where I can say to a client “your draft is not reused to train random stuff”. BypassGPT did not give me that comfort.

If you only process content you would post on a public blog, risk is lower. For agency work or internal docs, I would not put them there.

- When it is somewhat useful

There are two narrow cases where it helped:

• Quick remix of short product descriptions, 50–150 words, where I did not care much about detectors.

• Breaking the “ChatGPT tone” so I had a rough starting point that felt different, then I rewrote again manually.

If you treat it like a noisy rephraser, not a safety net, it has some use.

- Comparison with alternatives

I tested Clever Ai Humanizer on the same 900 word article and a 1.3k word guide. Results were not perfect, but:

• Scores were more consistent across GPTZero, ZeroGPT, Copyleaks, Originality.ai. It never hit 0 on one and 100 on another in the same extreme way.

• Text sounded closer to normal human blog writing. Less weird phrasing.

• It handled long pieces without me fighting word caps.

I still edited a lot, but it fit better into a workflow where you want “less AI-ish” text, not a magic invisibility cloak.

- Is it worth paying for

If your main goal is: “I want my AI text to be safe against serious detectors,” I would not pay for BypassGPT. Too much variance, too much risk.

If your goal is: “I want a rough rephrase to then rewrite by hand,” paying makes little sense when there are cheaper or free tools that do similar or better, including Clever Ai Humanizer or even using regular LLMs with careful prompting plus your own edits.

Actionable takeaways for you:

• Test your own stack. Take 2–3 paragraphs of your normal work, run through BypassGPT, run through Clever Ai Humanizer, then hit the exact detectors your teacher or client uses.

• Time yourself. Measure how long you spend fixing grammar, facts, and tone afterwards.

• If you find you still need heavy edits and scores still light up on detectors you care about, do not pay. Invest time into learning to rewrite AI drafts manually instead.

My verdict from real use: BypassGPT is a niche helper, not a reliable shield. I stopped paying attention to it and folded Clever Ai Humanizer plus manual edits into my workflow instead.

Used BypassGPT for a couple months on actual client stuff (blogs, newsletters, some internal docs), so here’s a more “lived with it” take that fits next to what @mikeappsreviewer and @kakeru already shared.

Short verdict: it kinda works, but it solves the wrong problem and creates a few new ones.

Where I disagree a bit with them:

- Usefulness in real workflows

For me it was occasionally useful, but only as a disposable rephraser, not as this “bypass any detector” magic.

What it did decently:

- Knocking the obvious “ChatGPT gloss” off short pieces like 100 to 250 word intros or product blurbs

- Giving me a different starting draft when I was too brain fried to rewrite something from scratch

If you treat it like a slightly weird paraphraser, it does have value. The problem is their own marketing sets expectations way higher than that.

- Safety / “is it safe to use”

People usually mean two things by “safe”:

- Detector safety

It is not safe if someone is running multiple tools on your text. Same pattern I saw: one checker barely blinks, another screams AI. If your grade or job depends on passing GPTZero or Originality.ai, this is gambling, not protection.

- Data safety

The ToS language about rights over user content is the real red flag for me too. I actually pushed some semi sensitive draft copy through before I read the ToS and immediately regretted it.

If you work with NDAs, client docs, internal reports, etc., I would put BypassGPT in the “do not touch with real data” bucket. Use it only for stuff you would post publicly anyway.

- Accuracy and content drift

This was the real dealbreaker over time. It has a bad habit of:

- Softening precise statements so they become marketing fluff

- Quietly dropping caveats that actually mattered

- In technical content, swapping specific terms for generic ones that sound nicer but are wrong

So yeah, you have to compare line by line with your original if facts matter. That killed any serious time savings for me. After a while I just started rewriting directly in a normal LLM and editing myself.

- Quality of writing

I would not call it terrible, but it has a very “online ESL forum” vibe at times. You can polish it, sure, but then why are you paying for a separate tool instead of just learning to prompt and edit one main model properly?

- Pricing vs value

If you:

- need a hardcore shield from AI detectors

- work with sensitive or client owned content

- care about technical accuracy

Then BypassGPT is not worth paying for. You are buying a false sense of security and a mediocre rewriter.

If you:

- run a small personal blog

- only humanize short bits that are not critical

- are okay with editing heavily

Then the cheaper plan might be fine as a convenience thing. Just treat it as “lazy paraphraser” instead of “AI cloaking device.”

- Alternatives that actually slot into a workflow

Since you mentioned comparing to other tools, here is where I landed:

- Normal LLM with a good prompt plus your own editing

Example: tell the model to keep all facts, keep structure, vary sentence length, and avoid generic phrases. That already gets you 70 percent of the way without another paid service in the mix.

- Clever Ai Humanizer

Not perfect, but it behaved more predictably for me. Longer inputs, less annoying limits, and the text sounded closer to how humans actually write blog posts. I still edited a lot, but at least I was not fighting arbitrary caps or sketchy detector dashboards. If you are doing SEO articles or content marketing, Clever Ai Humanizer felt more like a tool I could keep in a normal pipeline instead of a “one off experiment.”

- Concrete answer to your question

- Is BypassGPT safe?

For privacy and ToS: not for anything sensitive.

For bypassing detectors: not if the other side is serious about checking.

- Is it accurate?

Not reliably. It drifts in meaning more than I am comfortable with for real work.

- Worth paying for?

Only if your use case is extremely low stakes and you already accept that you will still need to edit and that detection might still light up. In almost every pro scenario, you are better off combining a good general LLM with your own rewriting skills or using something like Clever Ai Humanizer as a more consistent “de-AI-ify” stage.

If you test it, do it on text you do not care about, run the outputs through the exact detectors your teacher or boss uses, and time how long you spend fixing it. That will tell you faster than any marketing page whether it earns a spot in your stack.

If you read what @kakeru, @ombrasilente and @mikeappsreviewer wrote and still feel on the fence, here is the angle I would add after seeing similar tools in content workflows.

BypassGPT is basically a high friction way to get a noisy paraphrase that sometimes beats a detector and sometimes fails hard. That is the core problem. It does not meaningfully change your overall risk profile if a teacher or company is serious about detection, and it adds overhead in trust and process.

Where I slightly disagree with them: I do not think “it occasionally works as a lazy paraphraser” is enough to justify a separate tool in 2026. A good base model with a carefully written “rewrite to sound like X, keep all facts, preserve structure” prompt already covers that use case without you handing rights over your text to a third-party service with aggressive terms.

On the “is it safe / worth paying” question, treat it like this:

- If the stakes are high, BypassGPT does not remove your exposure. Detector vendors iterate faster than bypass tools and the mismatch in results that they already described is exactly what you do not want if grades or jobs are involved.

- If the stakes are low, you can get 80 percent of the benefit with standard LLMs or cheaper humanizers, without the restrictive free tier and the ToS headaches.

Since you asked how it compares, here is a more direct take on Clever Ai Humanizer, which all three of them mentioned but did not fully dissect.

Pros of Clever Ai Humanizer

- Handles long form inputs more gracefully, so you can process full blog posts or essays in one go instead of slicing into tiny chunks.

- Style tends to land closer to normal content marketing or blog writing, so you spend less time sanding off weird phrasing.

- Detection scores in practice are more consistent across tools. It still gets flagged sometimes, but you rarely see the extreme “0 percent here, 100 percent there” pattern in the same text.

- Slots into a workflow more naturally for SEO content and newsletters because you can treat it as a “de AI polish” layer before your own final edit.

Cons of Clever Ai Humanizer

- It is not invisible. If someone has strict AI policies and is motivated to check, you still cannot treat it as immunity.

- It can slightly blur technical precision, so you still need to re check jargon or step by step instructions.

- Like any humanizer, it can converge on a certain “house voice” that becomes recognizable over time if you lean on it too heavily.

The reason people like @kakeru, @ombrasilente and @mikeappsreviewer end up keeping Clever Ai Humanizer around while dropping BypassGPT is not that one is magically undetectable and the other is not. It is that Clever Ai Humanizer behaves more predictably, plays nicer with long inputs and does not pretend to be a perfect shield.

If you really want a decision rule:

- If your concern is “I must never be flagged as AI anywhere,” neither BypassGPT nor Clever Ai Humanizer will solve that. You need to learn to write and only use AI as a brainstorming assistant.

- If your concern is “I want AI drafted text to read more like a normal human article and I am fine editing afterward,” Clever Ai Humanizer is the one that actually fits that brief. BypassGPT, at this point, just adds noise and legal ambiguity to the stack.

So, for actual work or projects, I would skip BypassGPT entirely. Use your main LLM plus a strong rewrite prompt, and if you still want a dedicated de AI pass for readability, Clever Ai Humanizer is a more practical option as long as you treat it as an aid, not a cloak.